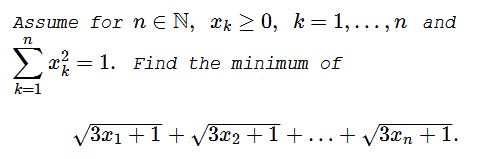

Optimization with Many Variables

Problem

Solution 1

Function $f(x)=\sqrt{3x+1}\,$ is concave on $[0,\infty),\,$ hence, by Karamata's inequality, applied to the sequences $\left(\displaystyle \sum_{k=1}^nx_k,\underbrace{0,\ldots,0}_{n-1}\right)\,$ and $(x_1,x_2,\ldots,x_n),\,$ gives

$\displaystyle \sum_{k=1}^nf(x_k)\ge f\left(\sum_{k=1}^nx_k\right)+n-1.$

Function $g(x)=\sqrt{x}\,$ is concave on $[0,\infty),\,$ hence, by Karamata's inequality, applied to the sequences $\left(\displaystyle \sum_{k=1}^nx_k^2,\underbrace{0,\ldots,0}_{n-1}\right)\,$ and $(x_1^2,x_2^2,\ldots,x_n^2),\,$ gives

$\displaystyle \sum_{k=1}^ng(x_k^2)\ge g\left(\sum_{k=1}^nx_k^2\right)$

so that $\displaystyle \sum_{k=1}^nx_k\ge 1.\,$ But $f\,$ is increasing, hence

$\displaystyle f\left(\sum_{k=1}^nx_k\right)+n-1\ge f(1)+n-1=n+1.$

It follows that $\displaystyle \sum_{k=1}^nf(x_k)\ge n+1.\,$ Note that, for the sequence $(1,\underbrace{0,\ldots,0}_{n-1}),\,$ $\displaystyle \sum_{k=1}^nf(x_k)= n+1,\,$ implying that $\displaystyle \min\left(\sum_{k=1}^n\sqrt{3x_k+1}\right)=n+1.$

Solution 2

Function $f(x)=\sqrt{3\sqrt{x}+1}\,$ is concave, $f(0)=1\,$ and $f(1)=2,\,$ implying that $g(x)=x+1\le f(x)\,$ on $[0,1].\,$ It follows that $\displaystyle \sum_{k=1}^nf(x_k^2)\ge\sum_{k=1}^ng(x_k^2)=n+1.\,$ Equality is attained for $(1,\underbrace{0,\ldots,0}_{n-1})\,$ and permutations.

Solution 3

$\sqrt{3x+1}\ge x^2+1,\,$ is equivalent to $3x\ge x^4+2x^2,\,$ or, else, to $x(1-x)(x^2+x+3)\ge 0.\,$ which is true. Thus,

$\displaystyle \sum_{k=1}^n\sqrt{3x_k+1}\ge\sum_{k=1}^n(x^2_k+1)=\sum_{k=1}^nx^2_k+n=1+n.$

Equality for $x_1=1,\,$ $x_2=\ldots=x_n=0\,$ and permutations.

Solution 4

The function $\sqrt{3x+1}\,$ is concave. It takes its minimum value for the maximum variance of $\mathbf{X}=(x_1,x_2,\ldots,x_n).\,$ The maximum variance is found for $\mathbf{X}=(1,0,\ldots,0)\,$ (or equivalent). Hence the minimum is $\sqrt{4}+(n-1)=n+1.$

Solution 5

We'll use the math induction on $n.\,$ For $n=2\,$ the problem is to minimize $f(t)=\sqrt{3\cos t+1}+\sqrt{3\sin t+1}.\,$ $f'(t)=\displaystyle -\frac{3\sin(t)}{2\sqrt{3\cos t+1}}+\frac{3\cos(t)}{2\sqrt{3\sin t+1}}.\,$ Rather obviously, $f'=0\,$ for $\displaystyle t=\frac{\pi}{4}.\,$ We can check that, for $t\in\displaystyle \left[0,\frac{\pi}{4}\right],\,$ $f'(t)\gt 0\,$ whereas, for $t\in\displaystyle \left[\frac{\pi}{4},\frac{\pi}{2}\right],\,$ $f'(t)\lt 0,\,$ implying that the minimum is attained on the boundary: $t=0\,$ or $\displaystyle t=\frac{\pi}{2}.\,$ Thus $F_2(x,y)\,$ attains its minimum at $(1,0)\,$ and $(0,1).\,$ The case where the constraint is $x^2+y^2=R^2\,$ gives similar result: the minimum is attained at $(R,0)\,$ and $(0,R).\,$

Now assume that $F_n(x_1,\ldots,x_n),\,$ subject to $x_1^2+\ldots+x_n^2=R^2,\,$ only attains its minimum at the points with a single nonzero coordinates, like $(R,0,\ldots,0).\,$ We shall prove that the same holds for $F_{n+1}(x_1,\ldots,x_n,x_{n+1}),\,$ subject to $x_1^2+\ldots+x_n^2+x_{n+1}^2=R^2.\,$

Note that $F_{n+1}\,$ does achieve its minimum on the sphere as the latter is a compact set. Assume that the minimum is attained at point $(p_1,\ldots,p_n,p_{n+1})\,$ with at least two nonzero components. WLOG, let these be $p_n\,$ and $p_{n+1}.\,$ Consider function $F_n(x_1,\ldots,x_n)\,$ restricted to the sphere $x_1^2+\ldots+x_n^2=R^2-p_{n+1}^2.\,$ By the inductive assumption, it attains its minimum at the points with a single non-zero component, e.g., $(0,\ldots,0,\sqrt{R^2-p_{n+1}^2}).\,$ This means that

$\begin{align} F_{n+1}(0,\ldots,\sqrt{R^2-p_{n+1}^2},p_{n+1})&=F_n(0,\ldots,\sqrt{R^2-p_{n+1}^2})+\sqrt{3p_{n+1}+1}\\ \le F_{n+1}(p_1,\ldots,p_n,p_{n+1}), \end{align}$

with equality only possible if just one of $p_1,\ldots,p_n\,$ is not zero. Thus, for $n\ge 3,\,$ the point of minimum always has at least one zero coordinate. So, assume that $F_{n+1}\,$ attains its minimum on the sphere $\displaystyle \sum_{k=1}^{n+1}x_k^2=R^2\,$ at $(0,p_2,\ldots,p_{n+1}).\,$ By dropping that coordinates, we observe that the function $F_n\,$ attains exactly same minimum on the sphere $\displaystyle \sum_{k=1}^nx_k^2=R^2.\,$ Since, by the inductive assumption, the latter is only possible at the points with a single nonzero coordinate, returning the omitted zero coordinate to its place, affirms that the function $F_{n+1},\,$ likewise, attains its minimum on the sphere $\displaystyle \sum_{k=1}^{n+1}x_k^2=R^2\,$ at the points with a single nonzero coordinate.

Acknowledgment

Leo Giugiuc has kindly posted at the CutTheKnotMath facebook page a problem by Hung Nguyen Viet, with a link to its original location at the mathematical inequalities facebook group. Solution 1 is by Leo Giugiuc; Solution 2 is by Daniel Dan; Solution 3 is by Tam Giáp; Solution 4 is by N. N. Taleb; Solution 5 is by Andrea Acquaviva.

![]()

|Contact| |Up| |Front page| |Contents| |Algebra|

Copyright © 1996-2018 Alexander Bogomolny74271467