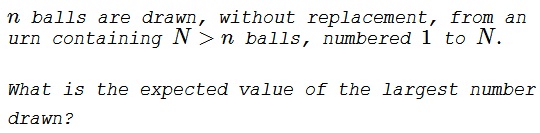

Expectation of the Largest Number

Problem

A Letter (and Solution 1)

Dear Alexander,

I am writing about your Symmetry Principle as you applied it to the problem of the expected number of cards to be dealt in order to get the first ace.

I came up with the probability puzzle (AB: see above) and had been working on it.

After tediously solving it by normal means, I realized that my problem was the same as your ace problem. Using the same Symmetry Principle logic as you did to solve your ace problem: The $n$ drawn balls divide, by rank, the remaining $N-n$ balls in the urn into $n+1$ groups of average size $\displaystyle \frac{N-n}{n+1}.$ The last group is from ball number $\displaystyle \left\lfloor\frac{n}{n+1}\cdot (N+1)\right\rfloor +1$ to ball number $N.$ The ball just before the first ball of the last group is the expected highest ball drawn:

$\displaystyle E(max) = \left\lfloor\frac{n}{n+1}\cdot (N+1)\right\rfloor.$

It occurred to me that a little more can be squeezed out of these problems with more use of symmetry. By symmetry, the expected minimum is given by:

$\displaystyle \begin{align} E(min) &= \left\lfloor 1 - \frac{n}{n+1}\cdot (N+1)\right\rfloor\\ &= \left\lfloor \frac{1}{n+1}\cdot (N+1)\right\rfloor, \end{align}$

which is the same as the answer to your ace problem. The formulas for expected minimum and maximum can be generalized to any rank:

$\displaystyle E(k) = \left\lfloor \frac{k}{n+1}\cdot (N+1)\right\rfloor.$

for $k = 1$ to $n,$ where $k$ is the rank from smallest to largest.

Solution 2

For $k$ to be the largest ball, choose $k$ and $n-1$ balls from the first $k-1$ balls. Thus, the required expectation value is

$\displaystyle \begin{align}

E&=\frac{\sum_{k=n}^{N} k {k-1\choose n-1}}{{N\choose n}}=\frac{\sum_{k=n}^{N} n{k\choose n}}{{N\choose n}} \\

&=\frac{n}{{N\choose n}}\left[{n\choose n}+{n+1\choose n}+\ldots+{N\choose n}\right] \\

&=\frac{n}{{N\choose n}}{N+1\choose n+1}=\frac{n(N+1)}{(n+1)}.

\end{align}$

Solution 3

1) Drawing without replacement, we can make use of the hypergeometric distribution for the distribution of $Z$, the max of an $n$ size sub-sample of $1,2,\ldots,N$; with mass function $\mathbb{P}(Z=z)=\phi(.)$:

$\displaystyle \phi (n,N,z)=\frac{\binom{N-z-1}{n-1}}{\binom{N-z}{n}}=\frac{n}{N-z}$

The expectation can be written as follows:

$\displaystyle\begin{align}\mathbb

{E}(Z)&=\sum _{i=0}^{N-n} (N-i) \phi (n,N,i) \prod _{j=0}^{i-1} (1-\phi (n,N,j))=\frac{n \left(n N-\frac{\Gamma (-N)}{\Gamma (-n-1) \Gamma (n-N)}+n+N+1\right)}{(n+1)^2}\\

&= \frac{n N}{n+1}+\frac{n}{n+1}+\underbrace{ \frac{n \sin (\pi n) \Gamma (n+1) \csc (\pi N) \sin (\pi (n-N)) \Gamma (-n+N+1)}{\pi (n+1) \Gamma (N+1)}}_{=0} =\frac{n (N+1)}{n+1}.

\end{align}$

2) Drawing with replacement, we have a discrete uniform distribution with cumulative $F(n)=\frac{x}{N}$. The cumulative probability of $Z$ is, by standard argument (all $x$ below $z$), $\displaystyle F(Z\geq z)=(\frac{z}{N})^n$.

The expectation becomes:

$\displaystyle \mathbb{E}(Z) =\sum _{i=1}^N i \left(\left(\frac{i}{N}\right)^n-\left(\frac{i-1}{N}\right)^n\right)$

Acknowledgment

The above is the content of an anonymous comment left at an earlier page. Solution 2 is by Amit Itagi. Solution 3 is by N. N. Taleb.

For $k$ to be the largest ball, choose $k$ and $n-1$ balls from the first $k-1$ balls. Thus, the required expectation value is

$\displaystyle \begin{align} E&=\frac{\sum_{k=n}^{N} k {k-1\choose n-1}}{{N\choose n}}=\frac{\sum_{k=n}^{N} n{k\choose n}}{{N\choose n}} \\ &=\frac{n}{{N\choose n}}\left[{n\choose n}+{n+1\choose n}+\ldots+{N\choose n}\right] \\ &=\frac{n}{{N\choose n}}{N+1\choose n+1}=\frac{n(N+1)}{(n+1)}. \end{align}$

1) Drawing without replacement, we can make use of the hypergeometric distribution for the distribution of $Z$, the max of an $n$ size sub-sample of $1,2,\ldots,N$; with mass function $\mathbb{P}(Z=z)=\phi(.)$:

$\displaystyle \phi (n,N,z)=\frac{\binom{N-z-1}{n-1}}{\binom{N-z}{n}}=\frac{n}{N-z}$

The expectation can be written as follows:

$\displaystyle\begin{align}\mathbb {E}(Z)&=\sum _{i=0}^{N-n} (N-i) \phi (n,N,i) \prod _{j=0}^{i-1} (1-\phi (n,N,j))=\frac{n \left(n N-\frac{\Gamma (-N)}{\Gamma (-n-1) \Gamma (n-N)}+n+N+1\right)}{(n+1)^2}\\ &= \frac{n N}{n+1}+\frac{n}{n+1}+\underbrace{ \frac{n \sin (\pi n) \Gamma (n+1) \csc (\pi N) \sin (\pi (n-N)) \Gamma (-n+N+1)}{\pi (n+1) \Gamma (N+1)}}_{=0} =\frac{n (N+1)}{n+1}. \end{align}$

2) Drawing with replacement, we have a discrete uniform distribution with cumulative $F(n)=\frac{x}{N}$. The cumulative probability of $Z$ is, by standard argument (all $x$ below $z$), $\displaystyle F(Z\geq z)=(\frac{z}{N})^n$.

The expectation becomes:

$\displaystyle \mathbb{E}(Z) =\sum _{i=1}^N i \left(\left(\frac{i}{N}\right)^n-\left(\frac{i-1}{N}\right)^n\right)$

Acknowledgment

The above is the content of an anonymous comment left at an earlier page. Solution 2 is by Amit Itagi. Solution 3 is by N. N. Taleb.

- What Is Probability?

- Intuitive Probability

- Probability Problems

- Sample Spaces and Random Variables

- Probabilities

- Conditional Probability

- Dependent and Independent Events

- Algebra of Random Variables

- Expectation

- Probability Generating Functions

- Probability of Two Integers Being Coprime

- Random Walks

- Probabilistic Method

- Probability Paradoxes

- Symmetry Principle in Probability

- Non-transitive Dice

![]()

|Contact| |Front page| |Contents| |Probability|

Copyright © 1996-2018 Alexander Bogomolny74261192